I am not receiving samples, could it be IP fragmentation?

What is IP fragmentation?

IP fragmentation occurs when the payload handed down by the transport layer (typically UDP or TCP) exceeds the maximum payload that fits in a single Ethernet frame, known as the Maximum Transmission Unit (MTU). When the receiver NIC gets IP fragments, it stores them in a buffer until all the fragments have been received and can be reassembled into UDP datagrams or TCP segments. Once all the fragments have arrived, reassembly is performed and the message is passed to the application layer. IP fragmentation may lead to communication issues if your system is not properly configured.

For DDS applications, IP fragmentation appears whenever you send a sample that is too large to fit in a single Ethernet frame (MTU). In the attached Wireshark capture mtu_1500_samplesize_5000.pcap, a publisher is sending a 5000-byte sample. However, the Ethernet frame for the sending NIC can only carry 1500 bytes, which is the standard maximum payload for Ethernet (IEEE 802.3). In the capture, you can see that packets 3, 4, 5 and 6 are IP fragments, and Wireshark shows the full payload in packet 6.
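The number of fragments seen in the capture can be checked with a little arithmetic. The sketch below is only an illustration: the function name `ip_fragment_count` is made up for this article, and it assumes an IPv4 header without options (20 bytes) and an 8-byte UDP header. The RTPS headers add some bytes on top of the 5000-byte sample, but not enough to change the result for this example.

```python
import math

IPV4_HEADER_BYTES = 20  # assumption: IPv4 header without options
UDP_HEADER_BYTES = 8


def ip_fragment_count(udp_payload_bytes: int, mtu: int = 1500) -> int:
    """Estimate how many IP packets are needed to carry one UDP datagram.

    A result of 1 means the datagram fits in one frame (no fragmentation).
    """
    # Each fragment carries its own IP header. Fragment offsets are expressed
    # in 8-byte units, so the data per fragment is rounded down to a multiple of 8.
    data_per_fragment = (mtu - IPV4_HEADER_BYTES) // 8 * 8
    # The UDP header is sent once, as part of the data of the first fragment.
    datagram_bytes = udp_payload_bytes + UDP_HEADER_BYTES
    return math.ceil(datagram_bytes / data_per_fragment)


# A 5000-byte payload over a 1500-byte MTU needs 4 IP packets,
# which matches packets 3 to 6 in the capture.
print(ip_fragment_count(5000))
```

With the same assumptions, a 1472-byte payload gives 1 (no fragmentation) and a 1473-byte payload gives 2.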

How can I know if my system is suffering from IP fragmentation?

The best way to know if your samples are being fragmented is to capture your traffic using Wireshark or tcpdump. In Linux systems, you can use netstat to gather statistics about your NIC:

> netstat -s --raw

In Windows systems, you can also use netstat:

> netstat -s

As mentioned above, the reassembly of these packets will only occur when all the fragments are received. Communication issues between DDS applications arise if:

- The buffer on the receiver side fills up with fragments while other fragments have yet to arrive. For instance, the resources that Windows systems allocate to temporarily hold IP fragments are specified as a maximum number of fragments. If this value is not large enough and the cleanup timeout period is too long, the system may run out of free resources to hold new incoming IP fragments. New fragments are then rejected until resources are cleaned up. If fragments in the buffer are cleaned up before a packet can be reassembled, reassembly fails because of the missing fragments. As a consequence, the sample is not delivered to the DDS application. Note that neither the number of fragments nor the cleanup timeout period is configurable on Windows systems.

- A fragment is lost in the network. Since IP fragments are not DDS fragments, DDS is not able to repair them individually. The NIC cannot reassemble the packet because of the missing fragment(s), so the sample is not delivered to the DDS application. Of course, DDS has a reliability mechanism that can repair the sample, but the repair has to send all the frames again.

- Some switches drop IP packets marked as fragments. This may happen because they are designed to do so or because they want to avoid an IP fragmentation attack.
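On Linux, the kernel also exposes its IP reassembly counters in /proc/net/snmp, so the symptoms above can be watched from a script. The following is a minimal sketch, not an RTI tool: `parse_ip_counters` is a name invented here, and the sample text contains made-up values in the layout that file uses (one "Ip:" line with field names followed by one "Ip:" line with values).

```python
# Made-up sample in the layout of /proc/net/snmp (values are illustrative only).
SAMPLE_SNMP = """\
Ip: Forwarding DefaultTTL InReceives InHdrErrors InAddrErrors ForwDatagrams InUnknownProtos InDiscards InDelivers OutRequests OutDiscards OutNoRoutes ReasmTimeout ReasmReqds ReasmOKs ReasmFails FragOKs FragFails FragCreates
Ip: 2 64 1234 0 0 0 0 0 1200 1100 0 0 0 48 10 2 5 0 20
Udp: InDatagrams NoPorts InErrors OutDatagrams
Udp: 900 0 0 800
"""


def parse_ip_counters(snmp_text: str) -> dict:
    """Return the counters of the 'Ip:' section as a name -> int mapping."""
    ip_lines = [
        line.split()[1:]  # drop the leading "Ip:" token
        for line in snmp_text.splitlines()
        if line.startswith("Ip:")
    ]
    if len(ip_lines) != 2:
        raise ValueError("expected one header line and one value line for 'Ip:'")
    names, values = ip_lines
    return dict(zip(names, (int(v) for v in values)))


counters = parse_ip_counters(SAMPLE_SNMP)
# ReasmReqds: fragments received that needed reassembly
# ReasmOKs:   datagrams successfully reassembled
# ReasmFails: reassembly failures (for example, timeouts or missing fragments)
for name in ("ReasmReqds", "ReasmOKs", "ReasmFails"):
    print(name, counters[name])

# On a Linux host, the real counters could be read with:
#   parse_ip_counters(open("/proc/net/snmp").read())
```

A ReasmFails value that keeps growing while samples go missing suggests that fragments are being lost or discarded before reassembly completes.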

How can I avoid IP fragmentation?

IP fragmentation can be avoided if the DDS payload plus the UDP and IP headers fits within the Ethernet MTU. The maximum UDP payload that fits in an Ethernet MTU is 1472 bytes: out of the 1500 bytes in the Ethernet MTU, 20 bytes are used by the IP header and 8 more by the UDP header. You can check your NIC’s MTU in Linux systems with the following command:

> ifconfig

In Windows systems, the MTU for your NICs is shown by the command:

> netsh interface ipv4 show subinterface

RTI Connext DDS provides a property to set the maximum size of an RTPS packet: dds.transport.UDPv4.builtin.parent.message_size_max (described in the Connext documentation for the current release and for release 5.2.3). If you are using the UDPv4 over WAN transport, the property to use is dds.transport.UDPv4_WAN.builtin.parent.message_size_max. Setting this property to a value smaller than the maximum UDP payload that fits in the Ethernet MTU, that is, smaller than 1472 bytes, makes DDS fragment the data packets so that each RTPS message fits in a single Ethernet frame. In this article, we will refer to these DDS fragments as DATA_FRAG.
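The header arithmetic above can be turned into a quick check for a candidate message_size_max value. This is a sketch under the same assumptions as the text (a 20-byte IPv4 header without options and an 8-byte UDP header); the helper names `max_udp_payload` and `fits_in_one_frame` are hypothetical and are not part of any RTI API.

```python
IPV4_HEADER_BYTES = 20  # assumption: IPv4 header without options
UDP_HEADER_BYTES = 8


def max_udp_payload(mtu: int) -> int:
    """Largest UDP payload that fits in a single frame for the given MTU."""
    return mtu - IPV4_HEADER_BYTES - UDP_HEADER_BYTES


def fits_in_one_frame(message_size_max: int, mtu: int = 1500) -> bool:
    """True if a UDP payload of message_size_max bytes needs no IP fragmentation."""
    return message_size_max <= max_udp_payload(mtu)


print(max_udp_payload(1500))          # 1472, as computed in the text
print(fits_in_one_frame(1450))        # True: the value used in this article
print(fits_in_one_frame(5000))        # False: this payload would be IP-fragmented
```

If the path between your applications has a smaller MTU than the local NIC (for example, across a tunnel), the smallest MTU on the path is the one to use in the calculation.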

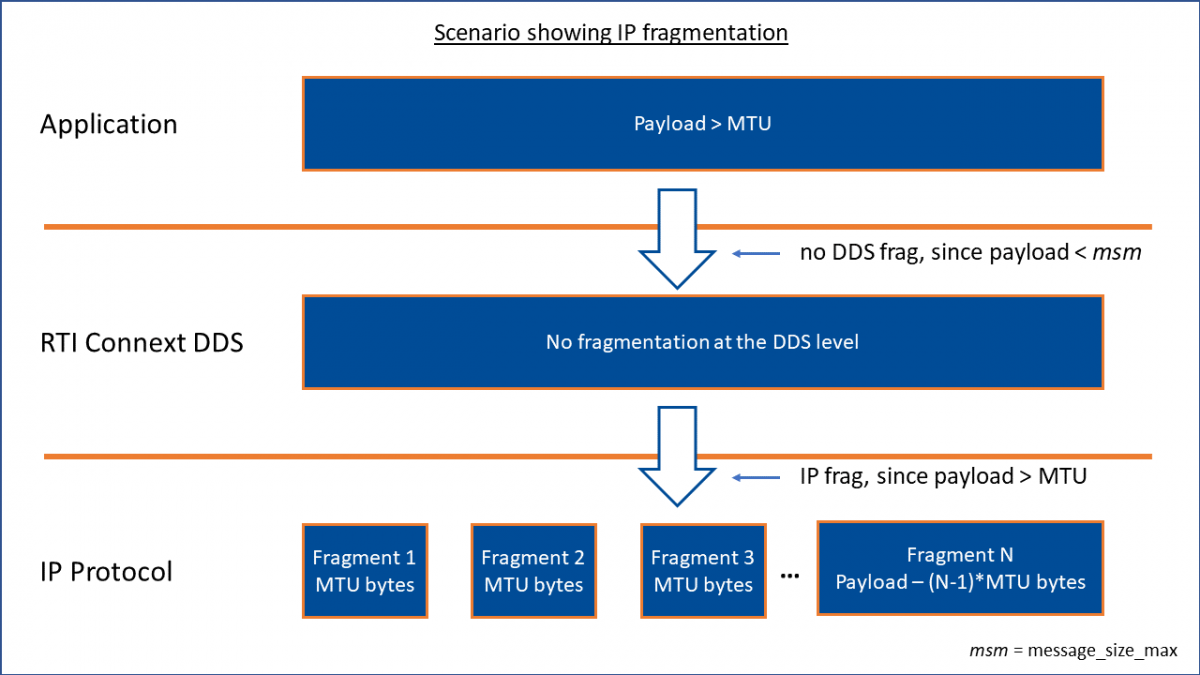

The following diagrams show the differences between IP fragmentation and DDS fragmentation. RTPS, UDP and IP headers are omitted to keep the diagrams simple.

Fig. 1. Scenario showing IP fragmentation

Fig. 2. Scenario showing DDS fragmentation

The main advantages of making DDS do the fragmentation instead of letting the UDP/IP layer do it are:

- IP packets containing DATA_FRAGs are handed from the NIC’s buffer to the DDS application right away, without waiting for reassembly. This helps prevent the NIC’s buffer from overflowing with fragments.

- The middleware handles fragmentation and reassembly of fragments. As a consequence, when using Reliable communication, if an IP packet containing a DATA_FRAG is lost, Connext’s reliable protocol will try to repair only the missing DATA_FRAG instead of the entire DDS packet. This may help reduce network traffic in scenarios with reliable communication. Using Strict Reliability is highly recommended in this kind of scenario.
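The effect of a lost frame in both approaches can be illustrated with a short calculation. This is a simplified model, not a description of Connext internals: it assumes every frame is lost independently with the same probability, which real networks only approximate, and the function names are invented for this example.

```python
def datagram_survival_probability(fragments: int, loss_rate: float) -> float:
    """Probability that an IP-fragmented datagram arrives complete.

    With IP fragmentation, the datagram is only delivered if every
    one of its fragments arrives.
    """
    return (1.0 - loss_rate) ** fragments


def frames_resent_after_single_loss(fragments: int, dds_fragmentation: bool) -> int:
    """Frames that must be sent again when exactly one frame was lost."""
    # DDS fragmentation: only the missing DATA_FRAG is repaired.
    # IP fragmentation: the whole sample is repaired, so every frame is resent.
    return 1 if dds_fragmentation else fragments


# Sample split into 4 frames, 1% frame loss:
print(datagram_survival_probability(4, 0.01))                      # about 0.96
print(frames_resent_after_single_loss(4, dds_fragmentation=False)) # 4
print(frames_resent_after_single_loss(4, dds_fragmentation=True))  # 1
```

The more frames a sample needs, the more likely it is that at least one of them is lost, and the larger the difference in repair traffic between the two approaches.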

The main cost of this approach is that having Connext handle fragmentation may degrade performance compared to an ideal case with no IP fragmentation issues. However, if your system does suffer from IP fragmentation issues, this approach will make it work properly.

In the attached capture mtu_1500_samplesize_5000_parent_1450.pcap, you can see that packets 1-5 consist of DATA_FRAGs. These packets are DDS fragments for a sample of size 5000 bytes. These DDS fragments were fragmented according to “message_size_max” set to 1450.

Additional considerations

Note: These considerations apply only to RTI Connext 7.3.0 and below. Starting in release 7.4.0, asynchronous publishing is no longer a requirement for reliable DDS data fragmentation.

To fragment DDS packets with the Reliability QoS policy kind set to RELIABLE (recommended), you need to set your DataWriter’s publish mode kind to ASYNCHRONOUS_PUBLISH_MODE_QOS. With these settings, Connext uses a separate thread to send the fragments, which relieves your application thread from doing the fragmentation and UDP sending work.

Notice that asynchronous publish mode needs a FlowController. If no FlowController is defined, the default FlowController is used. With the default FlowController, the DATA_FRAGs are written as fast as the DataWriter can send them, which might overload the network or the DataReaders. The RTI Community forum provides an example of setting FlowControllers. An example of setting the DataWriter to be asynchronous is shown in the Example section below.

Depending on the size of your TypeCode/TypeObject, you may have to set the builtin DataWriters’ publish mode to be asynchronous, too. The reason is that the packets containing the TypeCode and the TypeObject may be larger than “message_size_max”, so they also need to be fragmented at the DDS level. This is done through the DiscoveryConfig QoS policy (see details in the example below). If you are not sending the TypeCode or the TypeObject, there is no need to set asynchronous publishing for the builtin DataWriters. If you need to send a big TypeCode/TypeObject, you can find useful information in this article.

Example

This example shows the QoS settings to:

- Set the DataWriter to be asynchronous (only required for RTI Connext 7.3.0 and below).

- Set the builtin DataWriters to be asynchronous (only required for RTI Connext 7.3.0 and below).

- Set the maximum size for RTPS packets.

    <!-- Set the DataWriter to be asynchronous -->
    <datawriter_qos>
        <publish_mode>
            <kind>ASYNCHRONOUS_PUBLISH_MODE_QOS</kind>
            <flow_controller_name>DEFAULT_FLOW_CONTROLLER_NAME</flow_controller_name>
        </publish_mode>
        <reliability>
            <kind>RELIABLE_RELIABILITY_QOS</kind>
        </reliability>
    </datawriter_qos>

    <participant_qos>
        <transport_builtin>
            <mask>UDPv4</mask>
        </transport_builtin>

        <!-- Set the builtin DataWriters to be asynchronous
             if the TypeCode/TypeObject is bigger than the MTU -->
        <discovery_config>
            <publication_writer_publish_mode>
                <kind>ASYNCHRONOUS_PUBLISH_MODE_QOS</kind>
            </publication_writer_publish_mode>
            <subscription_writer_publish_mode>
                <kind>ASYNCHRONOUS_PUBLISH_MODE_QOS</kind>
            </subscription_writer_publish_mode>
        </discovery_config>

        <!-- Set this property to something lower than the MTU.
             For this example, the MTU is 1500 bytes -->
        <property>
            <value>
                <element>
                    <name>dds.transport.UDPv4.builtin.parent.message_size_max</name>
                    <value>1450</value>
                </element>
            </value>
        </property>

        <!-- If you are using the UDPv4 over WAN transport, uncomment the following property
             and comment dds.transport.UDPv4.builtin.parent.message_size_max above-->
        <!--<property>
            <value>
                <element>
                    <name>dds.transport.UDPv4_WAN.builtin.parent.message_size_max</name>
                    <value>1450</value>
                </element>
            </value>
        </property>-->
    </participant_qos>

References

- Improving network performance in Linux: https://community.rti.com/howto/improve-rti-connext-dds-network-performance-linux

- Blog post talking about the timeout in Windows systems: https://blogs.technet.microsoft.com/nettracer/2010/06/03/why-doesnt-ipreassemblytimeout-registry-key-take-effect-on-windows-2000-or-later-systems/

- Microsoft KB solution regarding the maximum number of fragments: https://support.microsoft.com/en-us/kb/811003